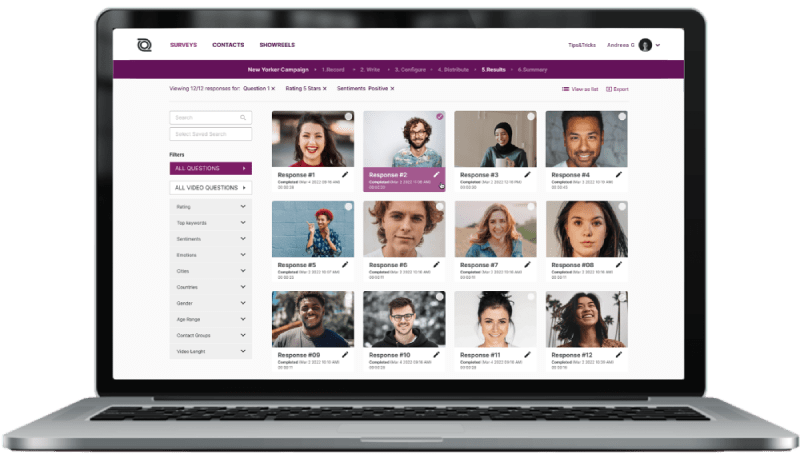

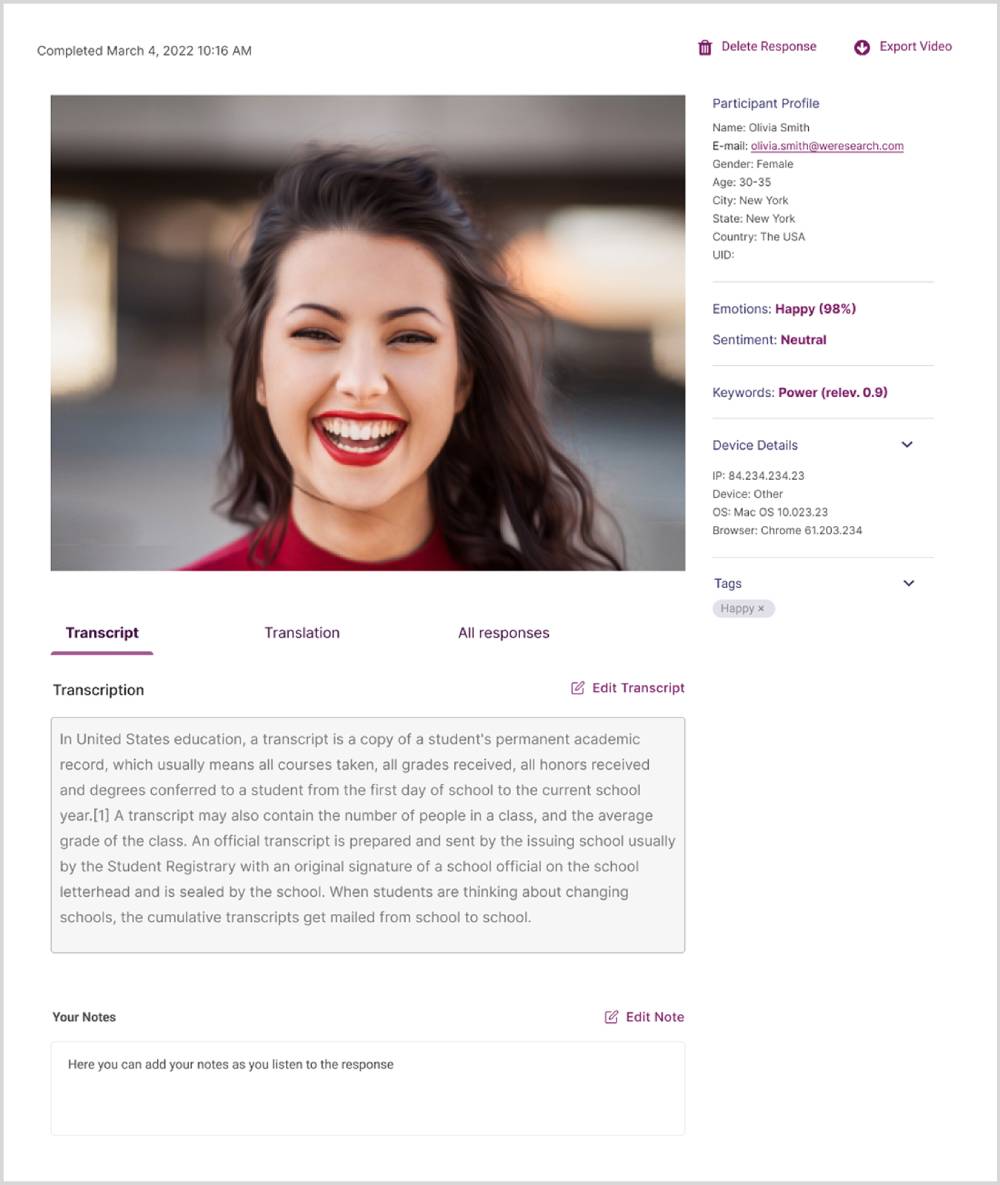

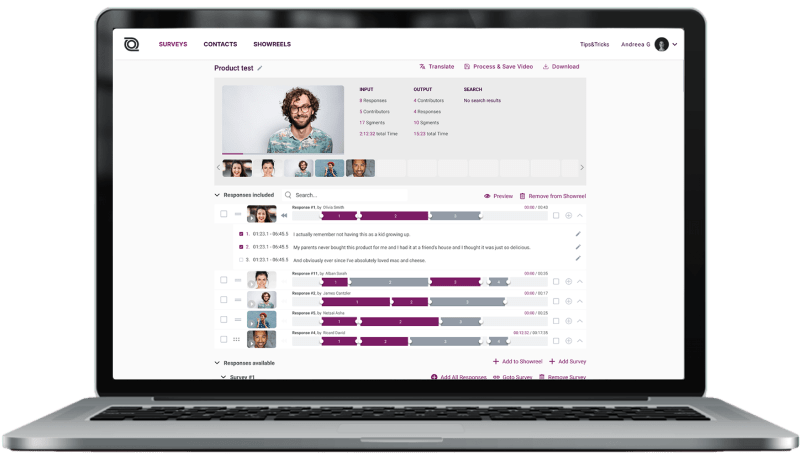

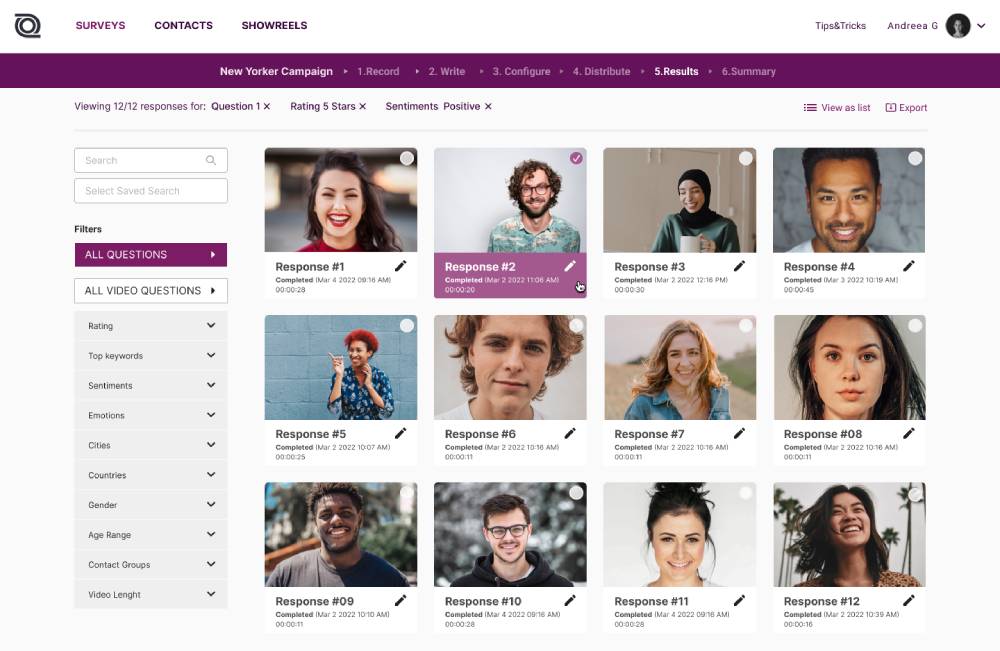

- Platform

- Methods

- Solutions

- Strategy

- Category Exploration

- Competitive Landscape

- Branding

- Consumer Segmentation

- Innovation & Renovation

- Concepts

- Packaging

- Claims

- Pricing

- Product Portfolio

- Marketing Creatives

- Advertising

- Shopper

- Shelf Optimization

- Performance Monitoring

- Better Brand Health Tracking

- Ad Tracking

- Trend Tracking

- Satisfaction Tracking

- Resources

- Demo